Key Takeaways

- A product data management process has five steps: intake, validation, enrichment, distribution, and ongoing maintenance. Most data quality problems trace back to gaps in one of them.

- The maintenance step is the one most companies skip. Published product data degrades without a structured review cycle and named data stewards.

- Manual data movement between systems, from ERP to PIM or from supplier to catalog, is one of the most common sources of catalog errors in practice. Automated integrations remove the version gap that manual exports create.

- A PIM system enforces the process. It does not replace it. Getting the process logic right before selecting tooling is what separates working implementations from organized messes.

Poor product data is expensive. Gartner research puts the average annual cost of bad data quality at $12.9 million per organization. For manufacturers and distributors, the damage is specific: wrong specs cause returns, incomplete attributes keep products out of search results, and inconsistent data across channels erodes buyer trust. Products also take longer to reach market when the data needed to list and sell them is incomplete or stuck in someone's inbox.

Most companies know they have a product data quality problem. Fewer have a product data management process that actually prevents it from recurring. The difference between the two is structural, not cosmetic.

What the Product Data Management Process Actually Covers

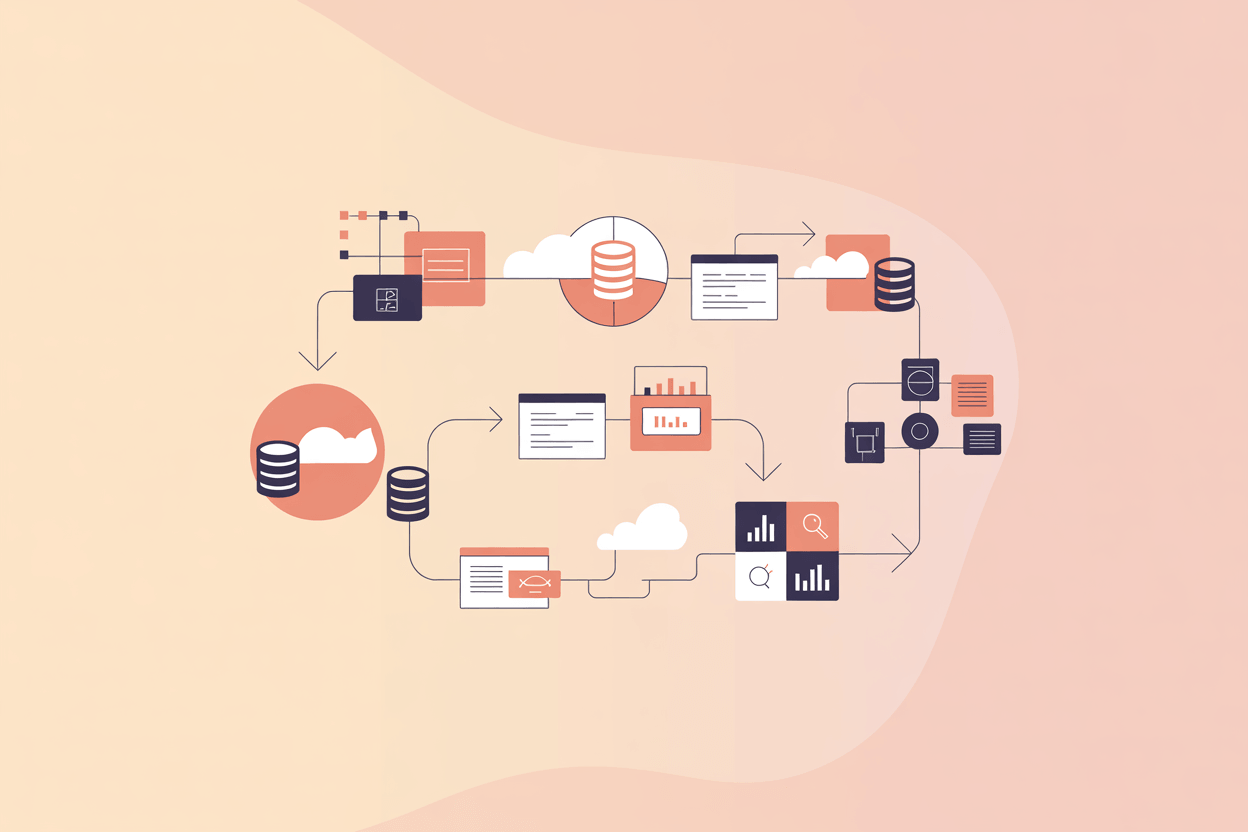

Product data management (PDM) is the set of steps and rules your organization uses to collect, validate, enrich, store, and distribute product information. It covers everything from how a new product record gets created to how an approved change reaches every sales channel.

PDM sits inside a broader product lifecycle, but it focuses on one specific question: is the product information accurate, complete, and available where it needs to be? Product lifecycle management (PLM) governs the full span from design to retirement. PDM is the discipline within that span that keeps the underlying product data accurate and usable at every stage.

The engineering world has used PDM for decades to manage CAD files, bills of materials, and design revisions. For manufacturers and distributors selling through digital and physical channels, the same principles apply to commercial product data: attributes, digital assets, descriptions, pricing, and compliance documentation. The data that comes out of engineering becomes the master data that marketing, sales, and channel teams depend on. The product data management process is what keeps those handoffs from breaking.

PDM is closely related to product information management (PIM), but the two are not the same thing. PDM manages the full scope of product data across the organization and its lifecycle. PIM's focus is narrower: enriching and distributing product content to commercial channels. In practice, a well-structured PDM process feeds into a PIM system, which handles channel-specific enrichment and syndication.

The process is not a software feature. You can have the most capable PIM system on the market and still produce bad data if the underlying process is broken. Software enforces process. It does not replace it.

The Core Steps of the Product Data Management Process

A functional product data management process moves through five distinct phases. They do not need to be elaborate, but they do need to be explicit. How fast products reach market, and how accurately they are represented when they do, depends largely on how well these steps are defined and enforced.

1. Data collection and intake

Every product record starts somewhere. For manufacturers, it often starts in engineering or procurement. For distributors, it comes from supplier data sheets, EDI feeds, or Excel files. The intake step defines what is required before a record can move forward and who is responsible for providing it.

In projects we implemented for distributors managing 20,000-plus SKUs, the intake step was the weakest link. Supplier data arrived in inconsistent formats, with missing fields and conflicting values across product families. The fix was an automated ingestion pipeline: receive the file, map it to the internal data model, validate against completeness rules, and flag any record below the completeness threshold before it enters the catalog. What used to take a week of manual cleanup was reduced to a two-hour review of flagged exceptions.
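The ingestion pipeline described above can be sketched in a few lines. This is a minimal illustration, not the pipeline from those projects. The supplier column names, the required fields, and the 75 percent completeness threshold are all hypothetical. A real pipeline would also handle other file formats, unit conversion, and a separate mapping per supplier.

```python
import csv
import io
from dataclasses import dataclass, field

# Hypothetical mapping from one supplier's column headers to internal field names.
SUPPLIER_COLUMN_MAP = {
    "Item No": "sku",
    "Description": "name",
    "Weight kg": "weight_kg",
    "EAN": "gtin",
}
# Assumed completeness rules: the fields every record should carry,
# and the share of them that must be filled before the record is accepted.
REQUIRED_FIELDS = ("sku", "name", "weight_kg", "gtin")
COMPLETENESS_THRESHOLD = 0.75


@dataclass
class IntakeResult:
    accepted: list = field(default_factory=list)
    # Each flagged entry is (mapped record, completeness score, missing fields).
    flagged: list = field(default_factory=list)


def map_row(row: dict) -> dict:
    """Rename supplier columns to internal names, dropping blank values."""
    record = {}
    for external, internal in SUPPLIER_COLUMN_MAP.items():
        value = (row.get(external) or "").strip()
        if value:
            record[internal] = value
    return record


def completeness(record: dict) -> float:
    """Share of required fields present in the mapped record."""
    return sum(f in record for f in REQUIRED_FIELDS) / len(REQUIRED_FIELDS)


def ingest(csv_text: str) -> IntakeResult:
    """Receive a supplier file, map each row, and split accepted from flagged records."""
    result = IntakeResult()
    for row in csv.DictReader(io.StringIO(csv_text)):
        record = map_row(row)
        score = completeness(record)
        if score >= COMPLETENESS_THRESHOLD:
            result.accepted.append(record)
        else:
            missing = [f for f in REQUIRED_FIELDS if f not in record]
            result.flagged.append((record, score, missing))
    return result


if __name__ == "__main__":
    supplier_file = (
        "Item No,Description,Weight kg,EAN\n"
        "A-100,Steel bracket,0.4,4006381333931\n"
        "A-101,Hinge,,\n"
    )
    result = ingest(supplier_file)
    # The review step only has to look at the flagged exceptions.
    for record, score, missing in result.flagged:
        print(record["sku"], f"{score:.0%} complete, missing {missing}")
```

Only the flagged list reaches a person. Accepted records continue to the validation step.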

2. Validation and data quality checks

Intake gets the raw data in. Validation ensures it meets your standards before anything moves downstream.

This step runs automated checks against defined rules: mandatory fields, value formats, attribute ranges, and consistency across related products. Validation should be neither optional nor manual. If your team is opening records one by one to check for missing images, the process is already failing.

Set hard stops for critical fields and soft warnings for preferred fields. A product without a main image should not be publishable. A product without a secondary image might be acceptable, depending on the category. The rules differ by product type and channel requirements.
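One way to express hard stops and soft warnings is a rule list per product type, where each rule is marked as blocking or advisory. The sketch below is a minimal illustration under that assumption. The product type, attribute names, and voltage range are hypothetical examples, not a recommended rule set.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    attribute: str
    check: Callable[[object], bool]
    message: str
    hard: bool  # True: blocks publishing; False: warning only


def present(value) -> bool:
    """Treat None, empty strings, and empty lists as missing."""
    return value not in (None, "", [])


# Hypothetical rule set for one product type; real rules differ by type and channel.
RULES = {
    "power_tool": [
        Rule("main_image", present, "main image is required", hard=True),
        Rule(
            "voltage_v",
            lambda v: isinstance(v, (int, float)) and 0 < v <= 1000,
            "voltage must be a number between 0 and 1000",
            hard=True,
        ),
        Rule("secondary_image", present, "secondary image recommended", hard=False),
    ],
}


def validate(product: dict) -> tuple[list[str], list[str]]:
    """Return (errors, warnings) for a product according to its type's rules."""
    errors, warnings = [], []
    for rule in RULES.get(product.get("type"), []):
        if not rule.check(product.get(rule.attribute)):
            target = errors if rule.hard else warnings
            target.append(f"{rule.attribute}: {rule.message}")
    return errors, warnings


def is_publishable(product: dict) -> bool:
    """Only hard-stop failures block publication; warnings do not."""
    errors, _ = validate(product)
    return not errors
```

Keeping rules as data rather than code makes it easy to add a product type or adjust a channel requirement without touching the validation logic.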

3. Data enrichment

Enrichment is where raw product data becomes commercially useful. Technical specifications get translated into buyer-facing language. Digital assets are attached, product relationships get mapped, and channel-specific content variants are created for each target market or distribution channel.

Most of the human effort in the process sits here, and clarity of ownership matters most at this stage. A procurement manager entering a new component should not also be writing the marketing description for it. Those are different skills, different sources of information, and different approval chains.

AtroPIM handles this through configurable workflows and role-based access. A product record can move through defined stages: draft, enrichment, review, approved, published. At each stage, the right team has the right access. Translators do not touch pricing. Marketing does not touch engineering specs. Nobody publishes without the review step being completed.
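The stage and permission model above can be sketched as a small state machine. This is a generic illustration, not AtroPIM's workflow configuration. The roles, editable fields, and transition rules are assumptions chosen to mirror the paragraph.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    ENRICHMENT = "enrichment"
    REVIEW = "review"
    APPROVED = "approved"
    PUBLISHED = "published"


# Allowed transitions. PUBLISHED is only reachable via REVIEW -> APPROVED,
# so nothing is published without a completed review.
TRANSITIONS = {
    Stage.DRAFT: {Stage.ENRICHMENT},
    Stage.ENRICHMENT: {Stage.REVIEW},
    Stage.REVIEW: {Stage.APPROVED, Stage.ENRICHMENT},  # reviewer can send it back
    Stage.APPROVED: {Stage.PUBLISHED},
    Stage.PUBLISHED: {Stage.ENRICHMENT},  # a later change reopens enrichment
}

# Hypothetical roles: who may move a record into each stage...
TRANSITION_ROLES = {
    Stage.ENRICHMENT: {"engineering", "marketing", "reviewer"},
    Stage.REVIEW: {"marketing"},
    Stage.APPROVED: {"reviewer"},
    Stage.PUBLISHED: {"reviewer"},
}
# ...and which fields each role may edit (translators never touch price).
EDITABLE_FIELDS = {
    "engineering": {"specs"},
    "marketing": {"description", "images"},
    "translator": {"description_de"},
    "pricing": {"price"},
}
# Assumed: records are locked for editing once they leave enrichment.
EDITABLE_STAGES = {Stage.DRAFT, Stage.ENRICHMENT}


class WorkflowError(Exception):
    pass


def can_move(record: dict, target: Stage, role: str) -> bool:
    return target in TRANSITIONS[record["stage"]] and role in TRANSITION_ROLES.get(target, set())


def can_edit(record: dict, field: str, role: str) -> bool:
    return record["stage"] in EDITABLE_STAGES and field in EDITABLE_FIELDS.get(role, set())


def move(record: dict, target: Stage, role: str) -> None:
    if not can_move(record, target, role):
        raise WorkflowError(f"{role} cannot move {record['stage'].value} -> {target.value}")
    record["stage"] = target


def edit(record: dict, field: str, value, role: str) -> None:
    if not can_edit(record, field, role):
        raise WorkflowError(f"{role} cannot edit {field} in {record['stage'].value}")
    record[field] = value
```

Because publication is reachable only through the approved stage, the "nobody publishes without review" rule is enforced by the transition table itself.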

4. Distribution and channel publishing

A product record approved in your PIM still needs to reach the right place in the right format. A B2B portal requires detailed technical attributes. Marketplace listings have character limits and specific field mappings. Print catalogs need assets at print resolution, not screen resolution.

Managing these separately is the source of most channel inconsistency. A single description change can require eight separate edits if product content is maintained per channel in isolated files.

The process step here is syndication: define channel profiles once, map your master data fields to each channel's requirements, and publish automatically. When the master record changes, all channels update from the same source.

In AtroPIM, channel profiles define the field mapping and format requirements for each output. The update happens once at the master record level. What reaches each channel is determined by the profile, not by whoever runs the export that day.
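A channel profile is essentially a field mapping plus per-channel constraints, applied to a single master record. The sketch below is not AtroPIM's profile format. It assumes hypothetical channel names, field names, and character limits. For simplicity it truncates over-length text. In practice you might prefer a dedicated short-text attribute, or blocking the export until the text fits.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ChannelProfile:
    name: str
    field_map: dict  # master field -> channel field
    max_lengths: dict = field(default_factory=dict)  # channel field -> max characters


# Hypothetical profiles; real channels define their own field names and limits.
PROFILES = [
    ChannelProfile(
        "b2b_portal",
        {"sku": "article_number", "name": "title", "description": "long_text",
         "voltage_v": "voltage", "weight_kg": "weight"},
    ),
    ChannelProfile(
        "marketplace",
        {"gtin": "ean", "name": "title", "description": "bullet_text"},
        {"title": 80, "bullet_text": 200},
    ),
]


def render(master: dict, profile: ChannelProfile) -> dict:
    """Build one channel's payload from the master record via the profile's mapping."""
    payload = {}
    for master_field, channel_field in profile.field_map.items():
        if master_field not in master:
            continue  # nothing to send; completeness is the validation step's job
        value = master[master_field]
        limit = profile.max_lengths.get(channel_field)
        if limit is not None and isinstance(value, str) and len(value) > limit:
            value = value[: limit - 3].rstrip() + "..."
        payload[channel_field] = value
    return payload


def publish_all(master: dict) -> dict:
    """One master record in, one payload per channel out."""
    return {profile.name: render(master, profile) for profile in PROFILES}
```

A description change is made once, on the master record. Calling `publish_all` again regenerates every channel payload from that single source.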

5. Ongoing maintenance and governance

Product data degrades constantly: suppliers update specifications, regulations tighten compliance requirements, and market positioning shifts the language used to describe products. A product data management process without a maintenance loop produces increasingly stale data, and stale data compounds quietly until a return, a complaint, or a failed audit makes the cost visible.

Version control and change management belong in the daily workflow rather than being bolted on as an afterthought. Every approved change to a product record should be tracked: what changed, who approved it, and when. That audit trail matters for regulatory compliance, for quality assurance, and for tracing problems back to their source when something goes wrong downstream.
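A minimal audit trail needs an append-only log that captures what changed, the old and new values, who approved the change, and when. The class and field names below are hypothetical. The sketch keeps everything in memory, whereas a real system would persist the log.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ChangeEntry:
    sku: str
    field: str
    old: object
    new: object
    approved_by: str
    at: datetime


class AuditedCatalog:
    def __init__(self):
        self.records = {}  # sku -> {field: value}
        self.log = []      # append-only list of ChangeEntry

    def apply_change(self, sku, field, new, approved_by, at=None):
        """Apply an approved change and record it; no-op changes are not logged."""
        record = self.records.setdefault(sku, {})
        old = record.get(field)
        if old == new:
            return None
        entry = ChangeEntry(sku, field, old, new, approved_by, at or datetime.now(timezone.utc))
        record[field] = new
        self.log.append(entry)
        return entry

    def history(self, sku, field=None):
        """All logged changes for a record, optionally narrowed to one field."""
        return [e for e in self.log if e.sku == sku and (field is None or e.field == field)]
```

When a problem surfaces downstream, `history("A-100", "weight_kg")` shows each value the field has held, who approved it, and when.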

Data governance at this stage is not a policy document. It is a set of operating rules: who triggers a change request, who reviews it, who approves it, and which downstream channels are updated automatically when the change goes live. Schedule periodic audits by category. Assign data stewards with ongoing accountability for accuracy, not ownership that ends once the record is created.

Our customers in the building materials sector found that quarterly category reviews caught roughly 15 to 20 percent of records with outdated attributes, mostly driven by supplier specification updates that had been applied in the ERP but not reflected in the product catalog.
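A review cycle like this can be driven by a simple rule: any record not reviewed within the interval goes into the queue of the named steward for its category. The roughly quarterly interval, category names, and steward assignments below are assumptions for illustration. Records in a category without a steward land in an UNASSIGNED queue, so stewardship gaps show up instead of staying silent.

```python
from collections import defaultdict
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=91)  # assumed: roughly quarterly

# Hypothetical steward assignments per category.
STEWARDS = {"insulation": "m.keller", "fasteners": "s.ng"}


def due_for_review(records: list, today: date) -> dict:
    """Group SKUs whose last review is missing or older than the interval, by steward."""
    queues = defaultdict(list)
    for r in records:
        last = r.get("last_reviewed")
        if last is None or today - last >= REVIEW_INTERVAL:
            steward = STEWARDS.get(r["category"], "UNASSIGNED")
            queues[steward].append(r["sku"])
    return dict(queues)
```

Running this at the start of each cycle gives every steward a concrete worklist.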

Where the Product Data Management Process Breaks Down

Three failure modes account for most product data problems in practice.

Unclear data stewardship is the most common root cause. If nobody is specifically accountable for a product category's data quality, quality drifts. Ownership needs to be named, not assumed. Data governance is often described as a policy problem, but in practice it is an accountability problem. Policies without named owners produce nothing.

Manual data movement between systems is the second failure mode. Every time someone exports from an ERP, modifies in Excel, and imports to a PIM, there is a version gap. That gap is where errors enter. A product gets repriced in the ERP but the old price stays in the catalog. A technical specification changes in engineering but the updated value never reaches the channel. Automated integrations close the gap. AtroPIM's REST API follows the OpenAPI standard, which means integration with ERP systems, e-commerce platforms, and supplier portals can be built and documented without proprietary tooling.
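Whatever transport an integration uses, its core job is to detect where the catalog has drifted from the system of record and push the authoritative values. The sketch below assumes the ERP is authoritative for a hypothetical set of fields and compares two in-memory snapshots. It does not call AtroPIM's REST API or any real ERP interface.

```python
from typing import NamedTuple

# Assumption: the ERP is the source of truth for these fields only.
ERP_OWNED_FIELDS = ("price", "weight_kg", "status")


class FieldDrift(NamedTuple):
    sku: str
    field: str
    catalog_value: object
    erp_value: object


def find_drift(erp: dict, catalog: dict) -> tuple[list, list]:
    """Return (field-level drift, SKUs present in the ERP but missing from the catalog)."""
    drift, missing = [], []
    for sku, erp_record in erp.items():
        cat_record = catalog.get(sku)
        if cat_record is None:
            missing.append(sku)
            continue
        for field in ERP_OWNED_FIELDS:
            if field in erp_record and erp_record[field] != cat_record.get(field):
                drift.append(FieldDrift(sku, field, cat_record.get(field), erp_record[field]))
    return drift, missing


def apply_drift(catalog: dict, drift: list) -> None:
    """Overwrite only ERP-owned fields; catalog-owned content such as descriptions is untouched."""
    for d in drift:
        catalog[d.sku][d.field] = d.erp_value
```

Limiting the sync to fields the ERP owns keeps enrichment work in the PIM, such as descriptions, from being overwritten.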

Treating publication as the end of the process is the third. Once a product is live, it tends to get ignored until something goes wrong: a customer complaint, a return, a failed compliance audit. By then the cost of fixing the data is significantly higher than catching it during a scheduled review. Publishing a product is not the last step. It is the beginning of a maintenance obligation.

The Role of a PIM in the Product Data Management Process

A PIM system is not the process itself. It is the infrastructure that makes the process enforceable and scalable.

Without a PIM, process steps exist as informal agreements: a shared understanding that someone will check the specs before publishing, that supplier data gets reviewed before it enters the catalog, that translation is done before the German channel goes live. Informal agreements work when teams are small and catalogs are short. As soon as either grows, time to market extends, errors compound, and channel inconsistencies become the default rather than the exception.

The practical benefit of a PIM is that it turns the process into a system. Master product data lives in one place, workflow stages are defined with role-based access at each step, and validation catches errors at intake rather than after publication. Distribution to channels is automated, with format requirements handled by channel profiles rather than by whoever runs the export that week. Workflow automation replaces the informal coordination that breaks down at scale, and makes the product data management process auditable rather than approximate.

AtroPIM is built on the AtroCore data platform, which means it is not limited to managing product records. It supports any structured data, integrates with external systems via REST API, and handles business process management through configurable workflows.

For manufacturers with complex product hierarchies and distributors managing multi-supplier catalogs, this matters. You are not mapping your catalog to a fixed data model. You configure the data model to fit your catalog, including custom attributes, nested product relationships, and classification taxonomies that match how your products are actually structured.
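A configurable data model often means attribute definitions attached to a classification tree, with child categories inheriting their parents' attributes. The classes, taxonomy, and attribute names below are hypothetical and do not reflect AtroPIM's internal model. They only illustrate inheritance and type checking against a configured schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Classification:
    code: str
    attributes: dict = field(default_factory=dict)  # attribute name -> expected Python type
    parent: Optional["Classification"] = None

    def all_attributes(self) -> dict:
        """Own attributes plus everything inherited from parent classifications."""
        inherited = self.parent.all_attributes() if self.parent else {}
        return {**inherited, **self.attributes}


def check_record(record: dict, classification: Classification) -> list:
    """Report attributes not in the configured schema and values of the wrong type."""
    schema = classification.all_attributes()
    problems = []
    for name, value in record.items():
        if name not in schema:
            problems.append(f"unknown attribute {name!r}")
        elif not isinstance(value, schema[name]):
            problems.append(f"{name!r} should be {schema[name].__name__}")
    return problems


# Hypothetical two-level taxonomy: insulation inherits fire_rating from its parent.
building_materials = Classification("building_materials", {"fire_rating": str})
insulation = Classification(
    "insulation",
    {"thermal_conductivity_w_mk": float, "thickness_mm": int},
    parent=building_materials,
)
```

Adding a new subcategory means adding a node with its own attributes. No change to the checking code is needed.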

The open-source foundation means you can deploy it on your own infrastructure if data residency or security requirements demand it, or choose a SaaS deployment to avoid the maintenance overhead. You start with what you need and add modules as the catalog grows.

Where to Start with Your Product Data Management Process

If your current product data management process relies on spreadsheets and informal coordination, a full PIM implementation is the right direction but not always the right first step. Before selecting tooling, get clear on three things:

- What are your mandatory fields per product type?

- Who is the data steward for each product category?

- What does your approval workflow look like before a product goes live?

Those three questions expose most process gaps and define the governance structure your tooling will need to enforce. Map the process first, then implement the tooling that makes it stick.
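The answers to those three questions can be written down as plain data and checked for gaps before any tooling is chosen. The structure below is a hypothetical sketch. Product types map to categories, and the check reports three kinds of gap: product types without mandatory fields, categories without a steward, and an approval workflow without a review step.

```python
def process_gaps(product_types: dict, mandatory_fields: dict,
                 stewards: dict, approval_steps: list) -> list:
    """List unanswered governance questions.

    product_types: product type -> category (hypothetical structure)
    mandatory_fields: product type -> list of required field names
    stewards: category -> named data steward
    approval_steps: ordered stages a product passes before going live
    """
    gaps = []
    for ptype, category in product_types.items():
        if not mandatory_fields.get(ptype):
            gaps.append(f"{ptype}: no mandatory fields defined")
        if category not in stewards:
            gaps.append(f"{category}: no data steward assigned")
    if "review" not in approval_steps:
        gaps.append("approval workflow has no review step")
    return gaps
```

An empty result does not mean the process is good. It only means each question has an explicit answer that tooling can later enforce.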

Companies that implement a PIM without answering those questions end up with a well-organized repository of incomplete and inconsistently maintained product master data. The tooling is only as reliable as the process it runs.