Content silos are one of those problems that grow quietly. A team adopts a new tool. Another team keeps using the old spreadsheet. The e-commerce platform gets its own product database. Nobody plans for fragmentation; it just accumulates.

By the time the problem becomes visible, it is already expensive. Product descriptions contradict each other across channels. Updates made in one system take days to reach another. Multiple people in different departments are manually re-entering the same data. Customers get inconsistent information depending on where they look.

MIT Sloan research shows companies lose between 15% and 25% of annual revenue due to poor data quality (source). Gartner puts the average annual organizational cost at $12.9 million (source). Product information is one of the most fragmented and highest-impact data categories for any company selling across multiple channels.

What Are Content Silos?

In the context of product data, content silos are isolated repositories of product information that exist across disconnected systems within the same organization. Marketing manages descriptions in a CMS. Sales stores specs in a CRM. The warehouse runs its own inventory system. The e-commerce platform maintains its own product database. Each system holds a piece of the product record, but none holds all of it, and none is consistently in sync with the others.

The result is that product information becomes fragmented by department rather than organized around the product itself. No single version of the record is authoritative. Updates made in one system do not automatically reach others. The longer this structure persists, the wider the gaps between versions become, and the more expensive they are to close.

This is different from the SEO concept of content silos, which refers to organizing website pages into thematic clusters for search engine visibility. That is a structural strategy. What we are describing is an organizational data problem with direct operational and commercial consequences. The rest of this article is about what causes it, what it costs, and what fixing it actually requires.

How Content Silos Form

Most product data fragmentation does not happen because of bad decisions. Each department solves its own problem independently. Marketing picks a CMS that gives them editorial flexibility. Sales attaches specs to CRM records because that is where they already work. The e-commerce team integrates product data directly into the platform because the sync needs to be fast. Each choice is reasonable. Collectively, they create a situation where no single version of the product record is authoritative.

The symptoms become clear once you know what to look for, and in our experience they follow a predictable pattern. A product page shows one weight; the PDF datasheet shows another. A customer calls after reading wrong compatibility information on a partner portal. A product launch slips because coordinating updates across five systems takes two weeks instead of two days. The marketing team rewrites a description that the product team updated last month, because nobody told them it had been changed.

Our customers often describe the same situation before they started working with us: a growing catalog, an expanding channel count, and a data management workload scaling faster than the team.

What Centralized Product Data Actually Means

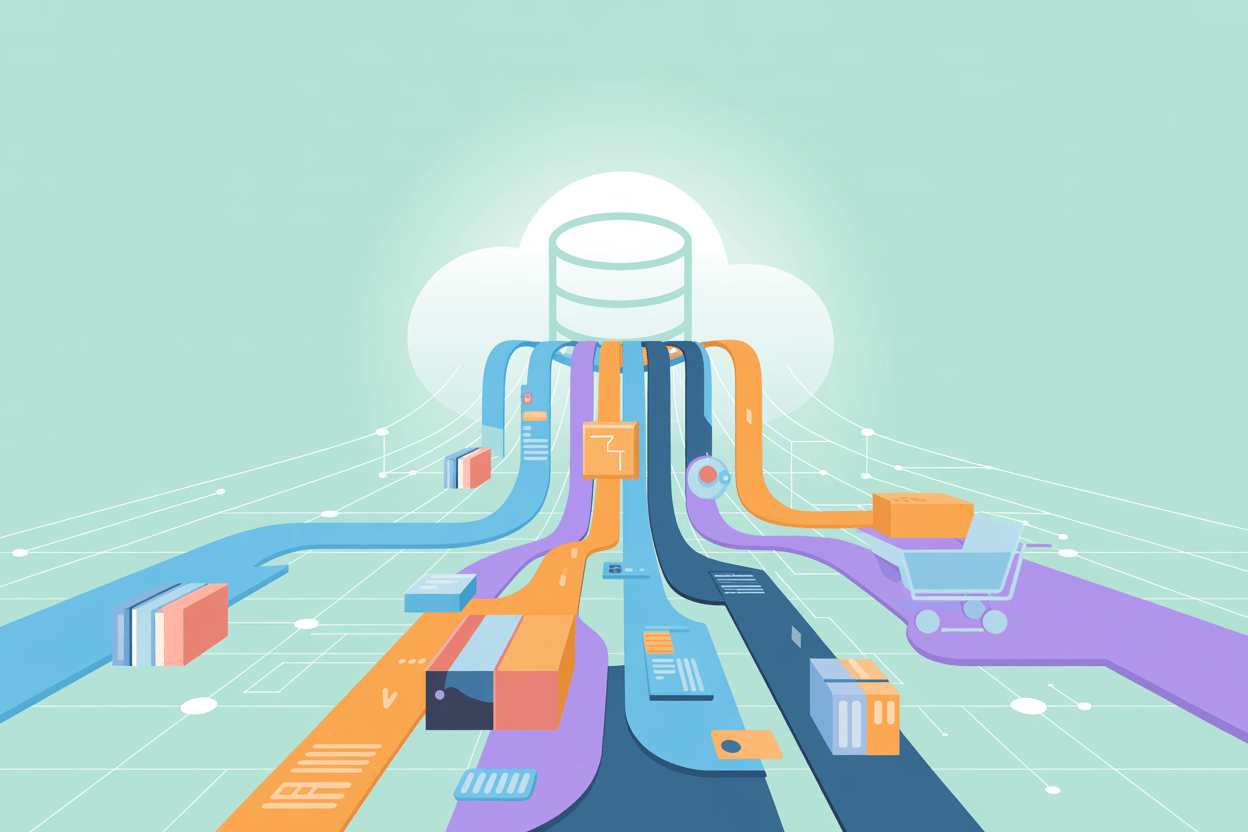

Centralized product data means one authoritative repository that all other systems draw from. Not one interface that everyone uses. Not a migration that eliminates every tool. The website, the marketplace feeds, the sales tools, the print catalog workflow — these all stay. What changes is where the data originates.

The central repository holds the master product record: descriptions, attributes, specifications, images, categorization, pricing references, regulatory data, and any other fields the business needs. Downstream systems consume this data through integrations. When something changes in the master record, it propagates to all connected systems automatically.

In practice, this matters most at the moment something changes. A supplier updates component specs. A regulatory requirement adds a new mandatory field. A brand refresh changes how a product line is described. Without a central record, that change has to be tracked down and applied in every system separately, and someone, somewhere, will miss one. With a central record, the change happens once and propagates automatically.
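The "change once, propagate everywhere" idea can be sketched in a few lines. This is a minimal illustration, not how any particular PIM implements it: `MasterRecordStore`, `make_channel`, and the two channel dictionaries are hypothetical names, and a real integration would push changes over an API or message queue rather than call in-process functions.

```python
from typing import Callable, Dict, List

# Hypothetical listener signature: (sku, attribute, new_value)
Listener = Callable[[str, str, object], None]


class MasterRecordStore:
    """Holds the authoritative product records and notifies subscribers."""

    def __init__(self) -> None:
        self._records: Dict[str, Dict[str, object]] = {}
        self._listeners: List[Listener] = []

    def subscribe(self, listener: Listener) -> None:
        # Each downstream system registers once.
        self._listeners.append(listener)

    def update(self, sku: str, attribute: str, value: object) -> None:
        # The change is written once, in the master record...
        self._records.setdefault(sku, {})[attribute] = value
        # ...and then pushed to every connected system.
        for listener in self._listeners:
            listener(sku, attribute, value)


def make_channel(sink: Dict[str, Dict[str, object]]) -> Listener:
    """Build a listener that mirrors changes into a channel's own copy."""
    def on_change(sku: str, attribute: str, value: object) -> None:
        sink.setdefault(sku, {})[attribute] = value
    return on_change


# Stand-ins for two downstream systems (assumed for the example).
website: Dict[str, Dict[str, object]] = {}
marketplace: Dict[str, Dict[str, object]] = {}

store = MasterRecordStore()
store.subscribe(make_channel(website))
store.subscribe(make_channel(marketplace))

# A supplier updates a component spec: one write, two channels updated.
store.update("SKU-1001", "weight_kg", 2.4)
```

The point of the design is that nobody has to remember which systems hold a copy of the weight. The list of subscribers is the list of systems, and the update loop cannot skip one.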

This is the core function of a Product Information Management system. A PIM sits between the data sources and the distribution channels — the single point where product information is created, validated, enriched, and approved before it goes anywhere. That single point of control is what turns centralized product data from a concept into something operational.

AtroPIM is built on this principle. The platform manages the full product record in one place and connects to downstream systems through a REST API, with documentation generated per instance according to OpenAPI standards. In practice, that means channel-specific variants, localization, and attribute-level access controls are all handled within the same system, without external middleware for the core data flows.

The Operational Cost of Content Silos

The direct costs of siloed product data are easier to measure than most teams expect. Time spent searching for the current version of a file, re-entering data that already exists somewhere else, and correcting errors that reached customers because an update did not propagate: these are all quantifiable hours. In projects we have implemented for manufacturers managing several thousand SKUs across multiple markets, the pre-PIM audit almost always reveals the same thing: a significant share of product management time goes to coordination and correction rather than to actually improving the data.

Return rates are a reliable indicator. According to the Forrester Wave report on Product Information Management (Q2 2021), 18% of US shoppers returned an item bought online because the product description was inaccurate. Returns already cost retailers over $890 billion annually according to the National Retail Federation. Inaccurate product content is a direct contributor to that number, and it is one of the more fixable causes.

AtroPIM users track a measurable drop in returns within months of centralizing product data, because the specifications customers see on the website finally match what they receive. The fix is not better copywriting, but closing the gap between the authoritative spec and the published one.

Product launch timelines are the other measurable casualty. When a new product requires coordinated updates across a website, three marketplace accounts, a printed catalog, a sales configurator, and a partner portal, the launch date is set by the slowest system in the chain. With centralized product data and automated distribution, the bottleneck shifts from coordination to content creation, which is where it should be.

Data Quality as a Structural Problem

Poor product data quality is usually treated as a content problem. Teams hire writers, run audits, create style guides. These help, but they address the symptom rather than the cause. If the same product attribute exists in four systems with no defined owner and no validation rule, it will drift. The people maintaining it are not careless. There is simply no mechanism keeping it consistent.

Centralization makes data quality a structural property rather than a maintenance task. Validation rules enforce completeness before a record can be published. Workflow stages route changes through review before they reach any channel. Attribute ownership is assigned, so there is always a defined person responsible for a given field, and version history tracks what changed and who approved it. Enforcing these rules at the data layer, rather than catching problems after publication, is what separates centralized product data from simply having a tidy spreadsheet.

AtroPIM supports configurable validation at the attribute level. Required fields, acceptable value ranges, format rules, and conditional logic can all be enforced before a record moves downstream. The built-in DAM in AtroCore manages assets as part of the same record rather than as a separate system, so the product record stays coherent from the specification through to the final published asset.
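To make the four rule types concrete, here is a generic sketch of attribute-level validation. It is not AtroPIM's configuration format or API; the rule helpers (`required`, `in_range`, `matches`, `required_if`), the field names, and the thresholds are all assumptions chosen for illustration.

```python
import re
from typing import Callable, Dict, List, Optional

Record = Dict[str, object]
# A rule inspects a record and returns an error message, or None if it passes.
Rule = Callable[[Record], Optional[str]]


def required(field: str) -> Rule:
    def check(record: Record) -> Optional[str]:
        if record.get(field) in (None, ""):
            return f"{field}: required"
        return None
    return check


def in_range(field: str, low: float, high: float) -> Rule:
    def check(record: Record) -> Optional[str]:
        value = record.get(field)
        if value is None:
            return None  # absence is the job of required(), not of this rule
        if not isinstance(value, (int, float)) or not (low <= value <= high):
            return f"{field}: must be between {low} and {high}"
        return None
    return check


def matches(field: str, pattern: str) -> Rule:
    def check(record: Record) -> Optional[str]:
        value = record.get(field)
        if value is not None and not re.fullmatch(pattern, str(value)):
            return f"{field}: invalid format"
        return None
    return check


def required_if(field: str, other: str, equals: object) -> Rule:
    # Conditional logic: the field is mandatory only for some records.
    def check(record: Record) -> Optional[str]:
        if record.get(other) == equals and record.get(field) in (None, ""):
            return f"{field}: required when {other} is {equals!r}"
        return None
    return check


# Hypothetical rule set for one product family.
RULES: List[Rule] = [
    required("name"),
    in_range("weight_kg", 0.01, 500),
    matches("ean", r"\d{13}"),
    required_if("voltage", "category", "electrical"),
]


def validate(record: Record, rules: List[Rule] = RULES) -> List[str]:
    """Return every rule violation for the record (empty list = valid)."""
    return [msg for rule in rules if (msg := rule(record)) is not None]


def can_publish(record: Record) -> bool:
    # The record moves downstream only when no rule is violated.
    return not validate(record)
```

A record such as `{"name": "", "weight_kg": 900, "ean": "123", "category": "electrical"}` fails all four rules and is held back, while a complete record with a 13-digit EAN and a voltage passes. Because the check runs before distribution, the error never reaches a channel.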

Governance Is Not Optional

A PIM helps a lot, but it is not the whole answer. Organizations that implement a PIM without governance frameworks get inconsistent results. The technology works, but without defined ownership, approval workflows, and quality standards, the new system starts accumulating the same problems the old one had. Different people interpret attributes differently. Fields get populated inconsistently. The single source of truth becomes a slightly tidier version of the original fragmentation.

In projects where data migration was treated as the finish line, the catalog typically drifted again within six months, not because the platform failed, but because nobody owned the attributes after go-live.

Governance means deciding, before data migration begins, who owns each attribute, what values are acceptable, what the approval process looks like for different change types, and what quality standard a record must meet before it can be distributed. This is not complicated, but it requires cross-functional alignment that technology alone cannot provide.

The organizations that get the most out of centralized product data treat it as an ongoing capability, not a one-time implementation project. Catalogs change. Channels change. Markets change. A governance model that can adapt is worth more than an initial setup that is perfect on day one and brittle afterward.

The Channel Dimension

The pressure on governance scales directly with channel count. A business selling through one website and one marketplace has limited exposure to inconsistency. A manufacturer distributing through a direct website, three regional marketplaces, a network of resellers, a B2B portal, and a print catalog faces a coordination problem that manual processes cannot reliably solve, and where a governance gap compounds fast.

Each channel has its own requirements. Marketplaces enforce specific attribute formats and completeness thresholds. Print workflows require assets in defined formats. Reseller portals need channel-specific pricing and localized descriptions. Centralized product data handles these variations by storing the master record once and applying channel-specific transformation rules on output, rather than maintaining separate product records per channel. New channel launches become significantly less painful as a result. Without centralization, adding a marketplace or regional storefront means a new data migration and a new source of inconsistency. With a central record and a configurable output layer, it is a configuration task, not a project.
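The "store once, transform on output" pattern might look like the sketch below. The master record, the two channel formats, the 80-character title limit, and the 15% reseller discount are all invented for the example; real channel requirements come from each channel's own specification.

```python
from typing import Callable, Dict

Record = Dict[str, object]

# One master record, stored once (hypothetical fields and values).
MASTER: Record = {
    "sku": "SKU-1001",
    "name": {"en": "Cordless Drill", "de": "Akku-Bohrschrauber"},
    "weight_kg": 1.8,
    "list_price_eur": 129.0,
}


def to_marketplace(record: Record) -> Record:
    # Assumed marketplace format: weight in grams, title capped at 80 chars.
    return {
        "id": record["sku"],
        "title": record["name"]["en"][:80],
        "weight_g": round(record["weight_kg"] * 1000),
    }


def to_reseller_portal_de(record: Record) -> Record:
    # Assumed reseller format: German name and a 15% channel discount.
    return {
        "sku": record["sku"],
        "name": record["name"]["de"],
        "price_eur": round(record["list_price_eur"] * 0.85, 2),
    }


# The output layer is configuration: channel name -> transformation rule.
CHANNELS: Dict[str, Callable[[Record], Record]] = {
    "marketplace": to_marketplace,
    "reseller_de": to_reseller_portal_de,
}


def render(record: Record, channel: str) -> Record:
    """Produce the channel-specific view without touching the master record."""
    return CHANNELS[channel](record)
```

Adding a channel here means adding one entry to `CHANNELS`. No second copy of the product record is created, so there is nothing new to keep in sync.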

According to Mordor Intelligence, the global PIM market is valued at $19.95 billion in 2026 and is projected to reach $37.39 billion by 2031, growing at a 13.38% CAGR. Cloud deployments account for 63.5% of that market and are growing fastest — consistent with what we see in practice. The shift away from on-premise infrastructure has lowered the barrier to adoption significantly for mid-market companies.

How to Break Down Content Silos

The common mistake is starting with tool selection. The right sequence is the opposite: understand the current state of your product data first, then define what you need, then evaluate platforms.

Start with a data audit. It does not need to be exhaustive to be useful:

- Map where product information currently lives

- Identify who maintains each type of data

- Document where the most damaging inconsistencies occur

- Prioritize channels and product categories where errors have the most direct impact — whether that is return rates, lost sales, or compliance risk
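A first pass at the audit can be partly automated. The sketch below assumes each system can export its product data as a simple mapping of SKU to attributes; the system names, the `EXPORTS` structure, and `find_conflicts` are hypothetical, and attribute values are assumed to be simple comparable values such as numbers and strings.

```python
from collections import defaultdict
from typing import Dict, Tuple

# Hypothetical exports: system name -> {sku -> {attribute -> value}}
EXPORTS: Dict[str, Dict[str, Dict[str, object]]] = {
    "cms": {"SKU-1001": {"weight_kg": 1.8, "color": "blue"}},
    "crm": {"SKU-1001": {"weight_kg": 2.1, "color": "blue"}},
    "shop": {"SKU-1001": {"weight_kg": 1.8}},
}


def find_conflicts(
    exports: Dict[str, Dict[str, Dict[str, object]]]
) -> Dict[Tuple[str, str], Dict[str, object]]:
    """Return attributes whose value differs between at least two systems."""
    # (sku, attribute) -> {system: value as stored in that system}
    seen: Dict[Tuple[str, str], Dict[str, object]] = defaultdict(dict)
    for system, records in exports.items():
        for sku, attributes in records.items():
            for attribute, value in attributes.items():
                seen[(sku, attribute)][system] = value
    # Keep only the entries where the systems disagree.
    return {
        key: by_system
        for key, by_system in seen.items()
        if len(set(by_system.values())) > 1
    }


conflicts = find_conflicts(EXPORTS)
for (sku, attribute), by_system in conflicts.items():
    print(sku, attribute, by_system)
```

In this sample data only the weight is flagged, because the CRM disagrees with the CMS and the shop. The color is consistent wherever it exists, although the fact that the shop does not hold it at all is also worth noting in an audit.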

Run a pilot before scaling. One product category, one channel, or one market. The goal is not to prove the technology works, but to:

- Refine the data model

- Test the governance process

- Build internal understanding of what centralization actually requires before it applies to the full catalog

Select an area where the pain is obvious and measurable: a product line with high return rates, or a channel where update delays have caused visible problems. Starting with a low-visibility category wastes the pilot's potential to build internal momentum and surface real governance gaps.

Define attribute ownership before migration begins. Every field needs a responsible role, and this is the step most teams skip because it feels premature before the system is live. In practice, the teams that skip it spend the first year after go-live re-litigating decisions that should have been made before the first record was imported. A few principles to set before launch:

- Quality thresholds should reflect business impact, not theoretical completeness

- Channel connectors are a product data concern — the people who know what each channel requires should configure the output rules, not just the engineers building the connection
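Ownership can be checked mechanically before the first import. The following is a minimal sketch under assumed names: `DATA_MODEL`, `OWNERS`, and the role labels are illustrative, not a prescribed structure.

```python
from typing import Dict, List

# Hypothetical attribute list from the planned data model.
DATA_MODEL: List[str] = [
    "name",
    "description",
    "weight_kg",
    "ean",
    "safety_datasheet",
]

# Hypothetical ownership register: attribute -> responsible role.
OWNERS: Dict[str, str] = {
    "name": "product_management",
    "description": "marketing",
    "weight_kg": "engineering",
    "ean": "master_data",
}


def unowned_attributes(model: List[str], owners: Dict[str, str]) -> List[str]:
    """List attributes that have no responsible role assigned."""
    return [attribute for attribute in model if not owners.get(attribute)]


def ready_for_migration(model: List[str], owners: Dict[str, str]) -> bool:
    # Migration should wait until every field has an owner.
    return not unowned_attributes(model, owners)
```

Run against the sample data, `unowned_attributes` reports `safety_datasheet`, which is exactly the kind of field that tends to be discovered without an owner months after go-live.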

Treat change management as a core deliverable. Teams that have managed product data through spreadsheets and email for years have established habits, and those habits do not disappear when a new system goes live. The most common failure mode is training people on how to use the platform without explaining why the process is structured the way it is. When people understand the logic (why attributes have owners, why changes go through review, why the data model is built the way it is), adoption follows much more naturally.

What Changes After Centralization

The operational changes are tangible. Product launches that previously required multi-week coordination cycles are compressed to days. Attribute updates propagate to all channels in hours rather than weeks. New channel onboarding becomes a configuration task rather than a data migration project.

The less obvious change is what teams stop doing. The hours spent reconciling conflicting product records, chasing down the current version of a file, correcting errors caught by customers rather than by internal review — these disappear. The time shifts toward content improvement, market analysis, and channel expansion.

In a recent project for a mid-sized manufacturer, the product team estimated that they recovered roughly two full working days per week within the first quarter after go-live (time that had previously gone to data coordination rather than data improvement).

Manufacturers we have worked with describe a consistent transition: in the first months after centralizing product data, the main activity is cleanup. Errors that had accumulated across systems become visible and get corrected. After that phase, the work shifts to enrichment. With a reliable foundation, it becomes practical to systematically improve attribute completeness, add localized content, and build channel-specific variants — work that was always on the roadmap but never quite reached because the maintenance burden left no room for it. That is the practical difference between managing fragmented data and owning it.